ExpoTech's Future AI Data Centers in Bangladesh

Ghatail, Tangail

- Land: 4,200 acres

- Capacity: 1,050 MW (AC), or 1.05 GW (AC)

- Electricity: solar power plants, wind energy power plants, thermal power plants, waste-to-energy, a Solar Energy Storage System (SESS), and cryogenic energy storage

- Cooling: the Jamuna River, which flows alongside the site

Moddho Nila

- Land: 6,000 acres

- Capacity: 1,500 MW (AC), or 1.5 GW (AC)

- Electricity: solar power plants, a wind energy power plant, a thermal power plant, a biomass power plant, a hydrogen power plant, cryogenic energy storage, and a Solar Energy Storage System (SESS)

- Cooling: desalinated water from the Naf River

Char Khondokar, Char South Khondokar & Char Ramnarayan, Shonagazi, Feni

- Land: 10,000 acres

- Capacity: 2,500 MW (AC), or 2.5 GW (AC)

- Electricity: solar power plants, wind energy power plants, a thermal power plant, a hydrogen power plant, cryogenic energy storage, and a Solar Energy Storage System (SESS)

- Cooling: the Feni and Meghna Rivers
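Taken together, the three site listings imply a consistent land-to-capacity ratio. The short Python sketch below restates the figures above and divides them out. It assumes each MW (AC) figure is the site's total planned capacity, which the listing does not define further. The names `SITES`, `mw_per_acre`, and `portfolio_totals` are illustrative only.

```python
# Site figures as listed above: (planned capacity in MW AC, land area in acres).
SITES = {
    "Ghatail, Tangail": (1050, 4200),
    "Moddho Nila": (1500, 6000),
    "Char Khondokar, Char South Khondokar & Char Ramnarayan, Shonagazi, Feni": (2500, 10000),
}


def mw_per_acre(capacity_mw: float, acres: float) -> float:
    """Planned capacity divided by land area."""
    return capacity_mw / acres


def portfolio_totals(sites: dict) -> tuple:
    """Sum capacity and land area across all sites."""
    total_mw = sum(mw for mw, _ in sites.values())
    total_acres = sum(acres for _, acres in sites.values())
    return total_mw, total_acres


if __name__ == "__main__":
    for name, (mw, acres) in SITES.items():
        print(f"{name}: {mw_per_acre(mw, acres):.2f} MW/acre")
    mw, acres = portfolio_totals(SITES)
    print(f"All sites: {mw:,} MW (AC) across {acres:,} acres")
```

Run as a script, it shows that every site works out to 0.25 MW per acre. The three sites together total 5,050 MW (AC) across 20,200 acres.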

What makes an AI data center different?

Unlike traditional data centers that host mixed enterprise applications, ExpoTech AI data centers are optimized for specialized, compute-intensive tasks such as model training, fine-tuning, and AI inference. These workloads demand dense GPU clusters, high-volume east-west traffic, and continuous data movement through AI pipelines. The rise of deep learning, generative AI (GenAI), and large language models (LLMs) has pushed enterprise infrastructure well beyond the limits of traditional CPU-based, general-purpose computing environments. Training AI models requires large amounts of data and intensive processing. That in turn calls for thousands of GPUs working simultaneously, terabit-scale networking, and dependable access to massive datasets. Even routine inference demands high concurrency, low latency, and model-aware routing that older architectures cannot support.

As enterprises deploy larger models, integrate AI across business workflows, and expand real-time inference use cases, AI data centers have become foundational to:

- performance,

- governance, and

- long-term enterprise AI strategy.

AI data centers diverge from traditional designs in five major ways:

- GPU-accelerated compute: AI workloads require massively parallel computation, delivered by GPUs, TPUs, custom accelerators, and other specialized AI chips. These processors deliver the tensor-level throughput needed for training and inference. Tensor operations are computations on multi-dimensional arrays, the core data structure of machine learning and deep learning, and they are mathematically intensive. AI data centers depend on clusters of GPUs or accelerators to run these parallel neural network calculations. The GPUs process a model concurrently, so these systems need high-bandwidth interconnects and tight synchronization. They also need collective communication libraries, such as the NVIDIA Collective Communications Library (NCCL) or implementations of the Message Passing Interface (MPI) standard, which distributed training frameworks use to exchange data between GPUs.

- High-density racks and substantial power consumption: A conventional legacy rack may draw 8–10 kW of power. Modern AI racks routinely draw 40–120 kW. This drives major new requirements in electrical distribution, thermal design, and facility siting for electrical grid access. AI workloads also generate heavy east-west network traffic during training, as model parameters, data batches, and updates are shared between GPUs, servers, and storage. Handling this traffic requires non-blocking switch fabrics, low-latency interconnects such as InfiniBand or 800GbE, and traffic management that prioritizes GPU-to-GPU traffic.

- Next-generation interconnects: Training workloads generate extreme east-west traffic. AI data centers rely on 400–800 GbE Ethernet, InfiniBand (a specialized high-speed fabric running at 400–800 Gb/s), and high-performance switch fabrics. Together these minimize latency between accelerators and keep training and inference pipelines saturated. Training and inference also depend on fast access to vast amounts of data, yet many enterprises have outdated infrastructure and low data readiness. Storage is addressed with parallel file systems, high-throughput storage, tiered NVMe architectures, or a combination of these. Even so, data access and readiness often hamper AI progress more than compute limitations do.

- Hotter, denser thermal profiles: GPU clusters create concentrated heat zones that exceed the capacity of traditional air cooling. AI data centers must therefore integrate hybrid or advanced cooling to keep equipment within safe operating temperatures. Because GPUs run continuously at high utilization, AI data centers consume more power and generate more heat than traditional facilities. For racks of 40 kW and above, they rely on two things to maintain operations and avoid thermal throttling. The first is specialized cooling, such as liquid cooling or rear-door heat exchangers. The second is advanced power setups, such as dedicated feeds with on-site substations or smart power distribution.

- Workload orchestration and model-aware traffic: AI introduces new forms of traffic, including model-to-model communication, vector retrieval, real-time inference, and edge-to-cloud flows. High-bandwidth, low-latency networking and segmentation are essential. Traffic-aware orchestration, real-time monitoring, and policy enforcement are crucial for hybrid AI deployments, which makes traffic management tools key operational components for AI workloads and model-aware traffic. AI systems also introduce new regulatory and isolation requirements for sensitive artifacts, along with the risks of complex multi-tenant GPU pools. In response, ExpoTech AI data centers implement stronger security, governance, and visibility controls. These include model-aware security, tenant isolation from Layer 4 to Layer 7, traffic inspection, API governance, fine-grained access controls, and runtime observability. Together, these measures protect AI assets, enforce safe access, and ensure reliable operation in shared high-performance environments.
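The synchronization that NCCL and MPI handle is usually a collective operation such as all-reduce. Data-parallel training commonly uses it to sum gradients across GPUs after each step. The sketch below simulates the classic ring all-reduce algorithm in plain Python on toy vectors. It illustrates the communication pattern only; it is not NCCL's or any MPI library's actual code, and the function names are hypothetical.

```python
"""Toy ring all-reduce over simulated GPUs (plain Python lists).

Illustrates the collective pattern that libraries such as NCCL or MPI
provide; it is not their implementation.
"""


def ring_allreduce(grads):
    """Return one buffer per GPU, each holding the element-wise sum of all inputs."""
    n = len(grads)                       # GPUs in the ring
    size = len(grads[0])
    if size % n:
        raise ValueError("toy version: vector length must be divisible by GPU count")
    chunk = size // n
    buf = [list(g) for g in grads]       # each GPU's local working copy

    def idx(c):                          # element indices belonging to chunk c
        return range(c * chunk, (c + 1) * chunk)

    # Phase 1, reduce-scatter: at step s, GPU i sends chunk (i - s) % n to GPU i+1,
    # which adds it in. Afterwards GPU i holds the full sum of chunk (i + 1) % n.
    for s in range(n - 1):
        sends = [(i, (i - s) % n) for i in range(n)]
        payload = {i: [buf[i][k] for k in idx(c)] for i, c in sends}  # all sends happen "at once"
        for i, c in sends:
            dst = (i + 1) % n
            for off, k in enumerate(idx(c)):
                buf[dst][k] += payload[i][off]

    # Phase 2, all-gather: each completed chunk travels around the ring,
    # overwriting the partial sums held by the other GPUs.
    for s in range(n - 1):
        sends = [(i, (i + 1 - s) % n) for i in range(n)]
        payload = {i: [buf[i][k] for k in idx(c)] for i, c in sends}
        for i, c in sends:
            dst = (i + 1) % n
            for off, k in enumerate(idx(c)):
                buf[dst][k] = payload[i][off]
    return buf


def ring_traffic_per_gpu(payload_bytes, n_gpus):
    """Bytes each GPU sends in one ring all-reduce: 2 * (n - 1) / n * payload."""
    return 2 * (n_gpus - 1) / n_gpus * payload_bytes


if __name__ == "__main__":
    grads = [[1, 2, 3], [10, 20, 30], [100, 200, 300]]  # 3 GPUs, 3 elements each
    print(ring_allreduce(grads))          # every GPU ends with [111, 222, 333]
    print(ring_traffic_per_gpu(1e9, 8))   # 1.75e9 bytes for a 1 GB gradient on 8 GPUs
```

In a ring, each GPU transmits roughly 2(n−1)/n times the gradient size per step, almost independent of ring size. This is why east-west bandwidth, rather than GPU count alone, tends to bound synchronization time.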

How does power and cooling work at scale?

Energy is now one of the biggest constraints on AI adoption. AI racks run hotter and denser than conventional racks, and they run continuously under full load. Cooling typically accounts for 35–40% of total power consumption in AI data centers. Operators must therefore design around high power density, specialized cooling, and thermal zoning, and they must site facilities near reliable, cost-effective electricity supplies.

- High power density: A single GPU server can draw 3–7 kW, and a fully populated rack can reach 80–120 kW. This affects substation requirements, power distribution within the data center, and redundancy designs (N+1, 2N, or grid/renewables hybrid).

- Hybrid and liquid cooling: Air cooling alone is insufficient. AI environments typically integrate several techniques. The first is direct liquid cooling (DLC), since liquid can carry roughly 3,000 times more heat than the same volume of air. Others include immersion cooling for extreme densities, hot/cold aisle containment so that systems work more efficiently, and heat reuse systems that supply building heating, district heating networks, and similar uses.

- Thermal zoning: GPU clusters create localized heat zones that require fine-grained thermal monitoring and dynamic cooling allocation to specific locations rather than the entire data center.

- Location strategy: Because of power constraints, many facilities are sited near hydroelectric or other renewable sources, in regions with a grid surplus, or, where possible, in cooler climates.
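The figures above can be turned into a rough sizing calculation: 3–7 kW per GPU server, 80–120 kW per rack, 35–40% of power going to cooling, and N+1 or 2N redundancy. The sketch below is a back-of-the-envelope model only. The 16-server rack, the 100-rack hall, and the 1,000 kW power-unit size are hypothetical. `facility_power_kw` also simplifies by treating cooling as the only non-IT load.

```python
import math


def rack_power_kw(servers: int, kw_per_server: float, overhead_kw: float = 0.0) -> float:
    """IT load of one rack: GPU servers plus switches and other gear (overhead_kw)."""
    return servers * kw_per_server + overhead_kw


def units_required(load_kw: float, unit_kw: float, scheme: str) -> int:
    """Identical power units (UPS modules, feeds, etc.) needed under a redundancy scheme."""
    n = math.ceil(load_kw / unit_kw)     # N: the minimum number that can carry the load
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1                     # one unit can fail without dropping the load
    if scheme == "2N":
        return 2 * n                     # a complete, independent second path
    raise ValueError(f"unknown redundancy scheme: {scheme}")


def facility_power_kw(it_kw: float, cooling_share: float) -> float:
    """Total draw if cooling is `cooling_share` of the total and the rest is IT load."""
    return it_kw / (1.0 - cooling_share)


if __name__ == "__main__":
    rack = rack_power_kw(servers=16, kw_per_server=7.0)   # hypothetical 112 kW rack
    hall = 100 * rack                                     # hypothetical 100-rack hall
    for scheme in ("N", "N+1", "2N"):
        print(scheme, units_required(hall, unit_kw=1000, scheme=scheme))
    print(f"Facility draw at 40% cooling share: {facility_power_kw(hall, 0.40):,.0f} kW")
```

Take the hypothetical hall of 100 racks at 112 kW each, which is 11.2 MW of IT load. With 1,000 kW units, N requires 12 units, N+1 requires 13, and 2N requires 24. This is why 2N designs roughly double the electrical plant.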

Design and operational challenges

AI data centers introduce complexity across compute, data, and operations:

- Power and heat constraints: Facilities often reach their power limits before they run out of space. GPUs draw more power at peak load, which requires careful balancing of energy, redundancy, and cooling. Upgrades such as new substations and cooling plants therefore need extensive planning, permitting, and sometimes redesign. This turns capacity boosts into multi-year projects and occasionally leads to multi-region federated architectures.

- Training pipeline complexity: Distributed AI training requires tight GPU synchronization, so a lagging or failing GPU can slow the entire pipeline. Engineers monitor performance, networks, and jobs to understand how the architecture affects processing. This adds operational complexity, especially when workloads shift or datasets grow rapidly.

- Scalability limits: AI environments require near-linear infrastructure scaling, but not every layer scales equally. Networking fabrics can saturate under heavy east-west traffic, and storage systems struggle to feed GPUs with the data bandwidth that training requires. These mismatches often become chokepoints that limit performance.

- Data readiness: All enterprises have data, but most of it is not in an AI-compatible form. Converting raw, unstructured, or siloed data into clean, labeled, and consistent training inputs takes significant effort. Inconsistent metadata, missing lineage, and unverified quality delay dataset onboarding and make it harder for AI teams to maintain reliable feature pipelines. This gap between “data available” and “data usable” is a major barrier to scaling AI.

- Security and governance: AI introduces new security demands because assets such as models, checkpoints (training snapshots), and GPU resources pose risks that traditional controls cannot handle. Models can drift unnoticed, embeddings may leak sensitive information, and checkpoints may contain valuable IP that needs protection. Shared GPU environments require strong isolation to prevent cross-tenant access. AI workloads also require governance, runtime inspection, and model-aware security to monitor behavior across the entire pipeline.
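The effect of a single lagging GPU shows up clearly in a simplified model of synchronous data-parallel training, where every worker must finish its step before gradients are exchanged. The sketch below ignores communication overlap and other real-world effects. The 1.2× median threshold in `find_stragglers` is a hypothetical rule of thumb, not a standard.

```python
from statistics import mean, median


def synchronous_step_time(worker_times):
    """Synchronous data-parallel training waits for every worker at the gradient
    sync, so one step lasts as long as the slowest worker."""
    return max(worker_times)


def sync_efficiency(worker_times):
    """Average busy time divided by step time: 1.0 means no worker sat idle."""
    return mean(worker_times) / synchronous_step_time(worker_times)


def find_stragglers(worker_times, tolerance=1.2):
    """Indices of workers slower than `tolerance` times the median (hypothetical rule)."""
    m = median(worker_times)
    return [i for i, t in enumerate(worker_times) if t > tolerance * m]


if __name__ == "__main__":
    times = [1.0] * 8
    times[5] = 1.6                        # one lagging GPU
    print(synchronous_step_time(times))   # 1.6: every step is 60% slower
    print(round(sync_efficiency(times), 3))
    print(find_stragglers(times))         # [5]
```

Suppose seven GPUs take 1.0 s per step and one takes 1.6 s. Every step then takes 1.6 s, and the cluster keeps its GPUs busy only about 67% of the time.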

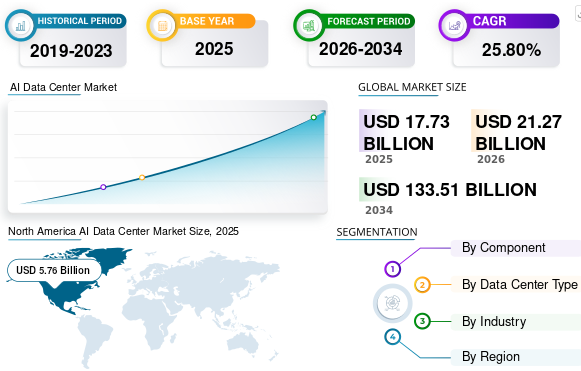

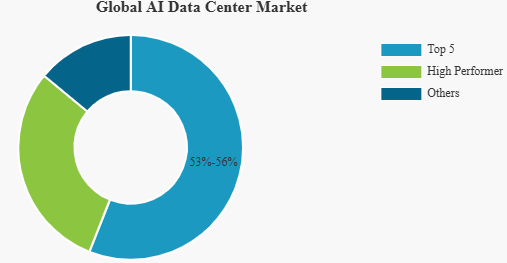

The evolution of AI in data centers

The journey of AI in data centers has evolved from rudimentary automation to the adoption of complex machine learning (ML) and neural networks that forecast, adapt, and proactively respond to fluctuating data demands and infrastructure health. This evolution highlights a strategic pivot towards building sustainable, efficient, and future-ready data infrastructures that are capable of self-management and real-time optimization.

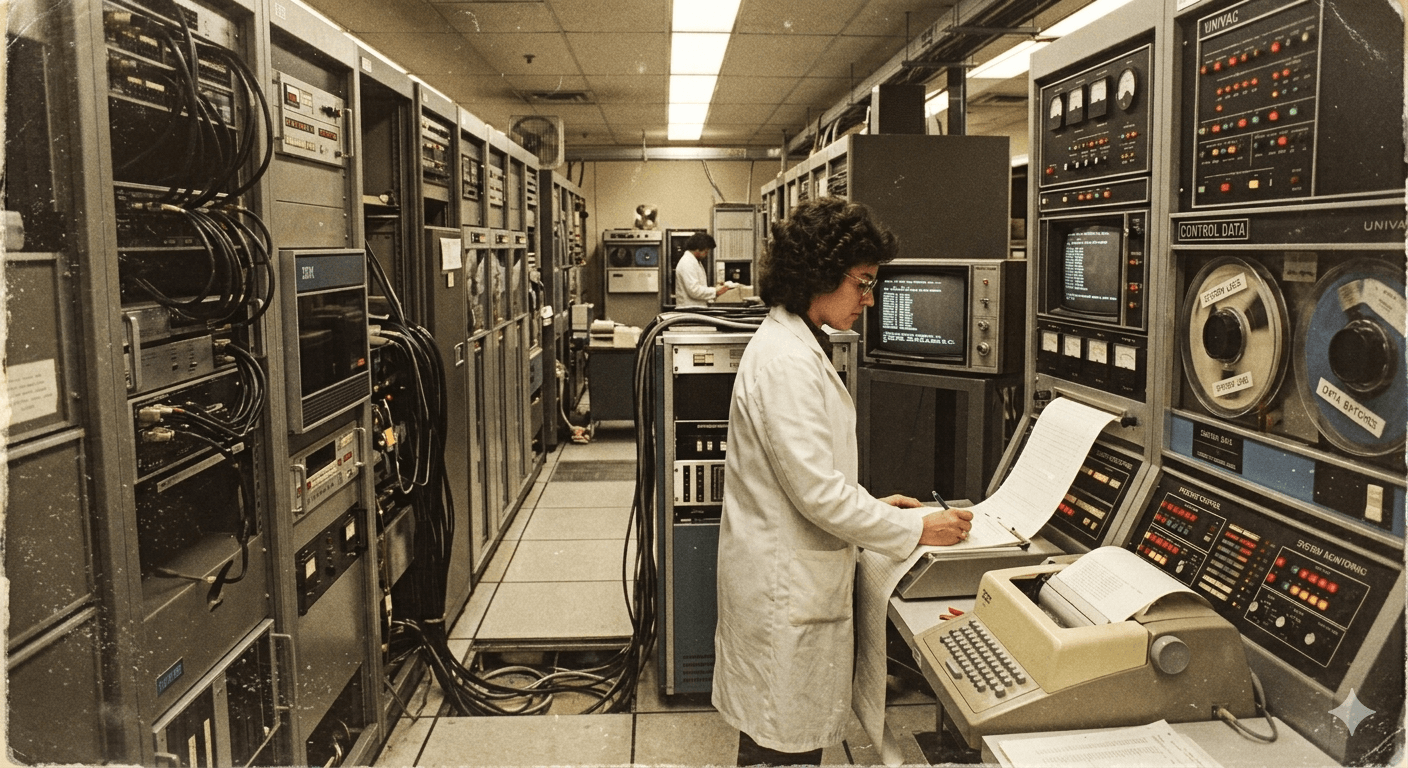

A historical insight into AI in data centers

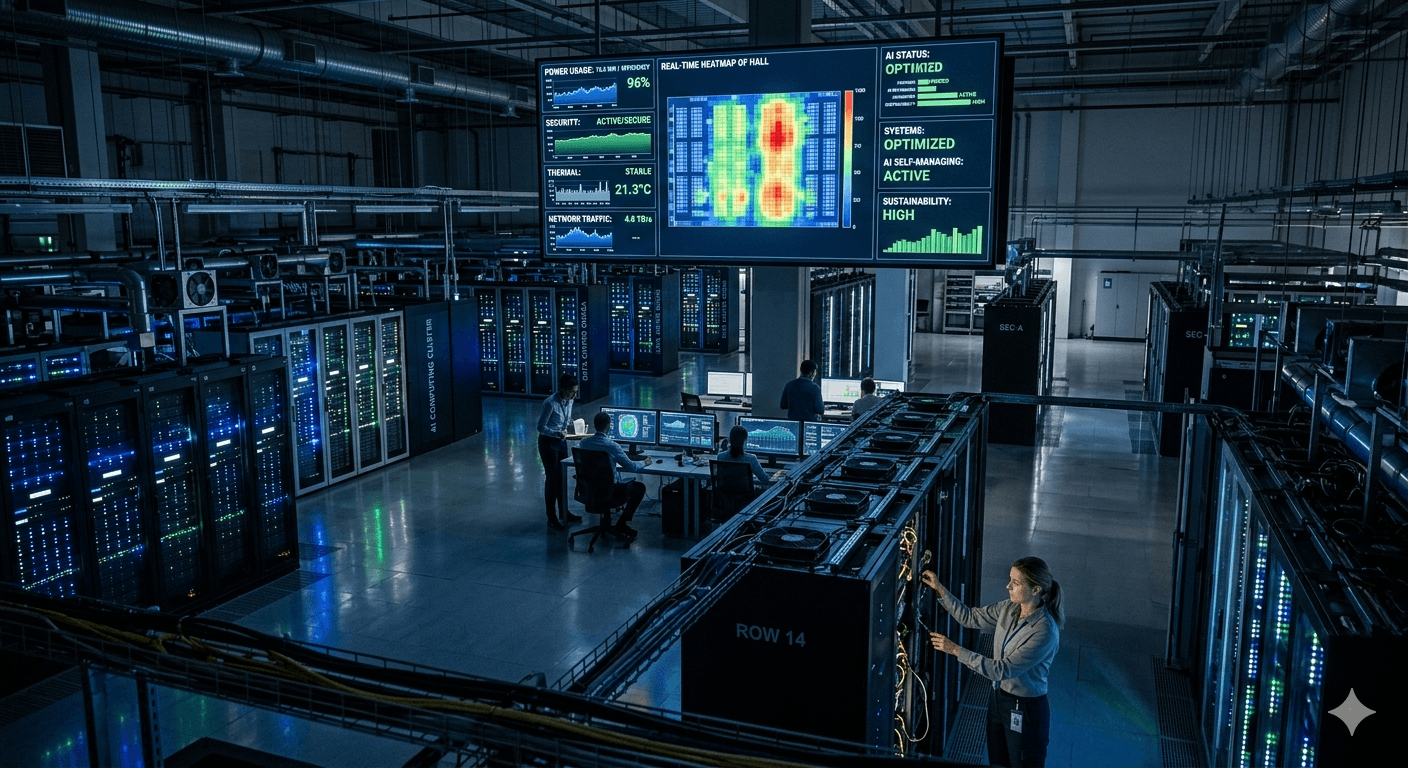

Modern AI data centers: A futuristic view

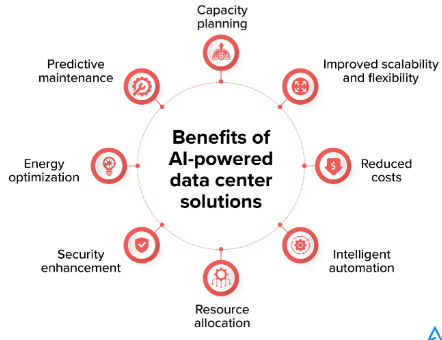

Benefits of artificial intelligence use in data centers

Integrating AI into data center operations offers several benefits:

Operational efficiency

AI significantly enhances workload distribution, automates maintenance tasks, and ensures optimal resource utilization, leading to substantial cost savings and freeing up human resources for strategic projects.

Predictive maintenance

AI’s predictive capabilities foresee potential equipment failures, facilitating timely interventions that reduce downtime and extend the life span of critical infrastructure components.
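A minimal illustration of the idea behind predictive maintenance is to flag telemetry that departs sharply from its recent baseline, such as fan vibration or inlet temperature. The sketch below uses a rolling z-score, which is a simple statistical baseline rather than a trained ML model. The window size, threshold, and sample readings are hypothetical, and production systems typically combine many signals with learned failure models.

```python
from collections import deque
from statistics import mean, pstdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings more than `threshold` standard deviations
    away from the mean of the preceding `window` readings (rolling z-score)."""
    history = deque(maxlen=window)   # sliding baseline of recent readings
    flagged = []
    for i, value in enumerate(readings):
        if len(history) == window:
            baseline = list(history)
            mu = mean(baseline)
            sigma = pstdev(baseline)
            # Skip perfectly flat baselines to avoid dividing by zero.
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                flagged.append(i)
        history.append(value)
    return flagged


if __name__ == "__main__":
    vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 1.0, 0.95, 3.0, 1.0]  # hypothetical sensor data
    print(flag_anomalies(vibration))  # [7]
```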

Energy efficiency

Through intelligent cooling control and optimized energy consumption, AI can substantially lower the environmental impact of data centers, contributing to global sustainability efforts.

How to implement AI in data centers

To implement AI in data centers successfully, you need to assess the existing infrastructure, choose the right AI tools and platforms, ensure compatibility, train staff, and establish protocols for continuous learning and adaptation.

Steps to integrate AI into data centers

Integrating AI into data centers typically follows a structured process:

1. Assessment

2. Planning

3. Pilot testing

4. Full integration

5. Training and development

Understanding the role of hardware in AI implementation

The surge in HPC (high-performance computing) demands significant re-engineering of data center infrastructure. Traditional data centers designed for CPU (central processing unit) workloads now face a challenge. GPU systems require more physical space, more power, and advanced cooling to manage their higher heat output.

AI in data center software

Software, from operating systems to application-level solutions, plays a critical role in managing data flows, analyzing performance metrics, and ensuring security and compliance. Relevant data center compliance standards must also be taken into account.

Importance of software in AI data centers

Software is the driving force behind AI in data centers, enabling the various AI models and algorithms to run. It includes the entire stack, from the firmware, operating systems, and AI frameworks to the orchestration layers that manage resources and workload scheduling.

Different types of software used in AI data centers

AI data centers utilize a diverse range of software, including:

Machine learning platforms

Data analytics software

Automation and orchestration tools

How AI improves data center software efficiency

AI enhances software efficiency by enabling predictive analytics for load balancing, automating routine maintenance tasks, and providing intelligent insights for decision-making. This reduces the need for manual intervention and increases overall efficiency.

The future of AI in data centers

The future of AI in data centers is marked by continuous innovation, with emerging technologies like quantum computing and 5G connectivity poised to further enhance AI’s capabilities. As we enter the mainstream wave of AI, enterprises are moving quickly to evaluate how AI can be used to accelerate business and reduce operational costs. The integration of AI will become more pervasive, driving not only operational efficiencies but also enabling data centers to play a crucial role in advancing AI research and development across various sectors.

Anticipated developments in AI data centers

Anticipated developments include more advanced machine learning algorithms, enhanced natural language processing for automated customer service, and the adoption of AI for sustainable resource management. Established data center best practices must also be followed to keep the infrastructure safe and efficient.

The role of AI in data center transformation

AI is set to transform data centers by enabling autonomous operations, real-time analytics for decision-making, and the facilitation of advanced services like Infrastructure as a Service (IaaS) and Platform as a Service (PaaS).

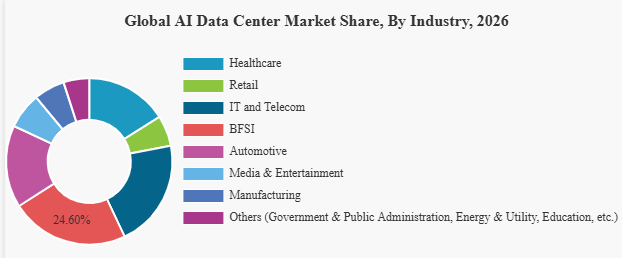

The impact on businesses and industries

AI's impact on businesses and industries is profound, with data centers becoming not only service providers but also innovation hubs, driving advancements in AI and offering competitive advantages through improved services and efficiencies.

Should you consider artificial intelligence when choosing a data center?

When selecting a data center, the incorporation of AI capabilities is a critical factor to consider. Data centers equipped with AI technologies, like those offered by Flexential, provide businesses with a competitive edge through enhanced efficiency, reliability, and scalability. These AI-driven data centers are better positioned to meet the dynamic demands of the digital economy.

As businesses evaluate their data center needs, a provider’s AI capabilities should be a top consideration. AI-driven data centers promise greater operational efficiency and sustainability, and they help businesses adapt quickly to technological advancements and market demands.

The integration of AI into data centers represents a pivotal shift towards more intelligent, efficient, and sustainable operations. As we look to the future, the role of AI in data centers will only grow. Two forces drive this: the increasing demand for data processing and the need for businesses to remain competitive in a rapidly evolving digital landscape. For companies like ExpoTech Data Centers, integrating AI into data center operations is not just about enhancing operational efficiency. It is about shaping the future of technology and ensuring that businesses have the infrastructure they need to thrive in the digital age.

By embracing AI, data centers can transcend traditional limitations, paving the way for innovations that will define the next era of digital transformation. The journey towards AI-driven data centers is not without its challenges, but the potential rewards for businesses, society, and the environment make it a venture worth pursuing.

The AI Shift: Why Data Center Build Strategy Must Evolve

AI and high-performance computing workloads are dramatically increasing rack densities. While legacy enterprise setups operated at 5–10 kW per rack, AI clusters can demand 30 kW, 50 kW, or even beyond 100 kW per rack in advanced deployments.

- Power Architecture must support higher loads with minimal transmission losses.

- Cooling Systems must move from traditional air cooling toward liquid cooling or hybrid systems.

- Floor Design and Structural Engineering must accommodate heavier equipment.

- Network Fabric must handle ultra-low latency and high bandwidth traffic.

A forward-thinking data center build accounts for these variables at the design stage, rather than retrofitting later at higher cost and risk.

Future-ready infrastructure is not reactive; it is engineered for what is coming next.

Building a Data Center for High-Density AI: Key Considerations

1. Power Scalability from Day One

When building a data center, power provisioning is the backbone of the entire project. AI clusters require stable, high-capacity electrical systems, often with redundant feeds and intelligent distribution.

Key considerations include:

- Modular power blocks for phased capacity expansion

- Integration of renewable energy and BESS for grid resilience

- Advanced UPS systems designed for high-density loads

- Busbar trunking and efficient power distribution layouts

Scalability should be built into the electrical backbone, enabling incremental upgrades without operational disruption.

2. Advanced Cooling Architectures

High-density AI environments generate immense heat. Conventional raised-floor air cooling may no longer suffice.

A modern data center build incorporates:

- Direct-to-chip liquid cooling

- Rear-door heat exchangers

- Immersion cooling for ultra-dense racks

Thermal management must be designed in tandem with structural and electrical systems. Cooling infrastructure cannot be an afterthought; it is a core design driver in AI-focused facilities.
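Liquid cooling's advantage follows from the sensible-heat relation Q = ṁ · c_p · ΔT. The heat a coolant removes equals its mass flow times its specific heat times its temperature rise. The sketch below solves for the volume flow needed to cool one rack with water and with air. The 120 kW rack and 10 K temperature rise are hypothetical, and the property values are approximate room-temperature figures.

```python
# Approximate coolant properties near room temperature.
WATER_CP = 4186.0      # specific heat of water, J/(kg*K)
WATER_DENSITY = 997.0  # kg/m^3
AIR_CP = 1005.0        # specific heat of air, J/(kg*K)
AIR_DENSITY = 1.2      # kg/m^3, roughly 20 °C at sea level


def volumetric_flow_m3_s(heat_w, cp, density, delta_t_k):
    """Coolant volume flow that carries heat_w with a temperature rise of delta_t_k.

    Rearranges Q = m_dot * cp * dT for the mass flow m_dot, then divides by density.
    """
    mass_flow_kg_s = heat_w / (cp * delta_t_k)
    return mass_flow_kg_s / density


if __name__ == "__main__":
    rack_w = 120_000   # hypothetical 120 kW rack
    dt = 10.0          # hypothetical 10 K coolant temperature rise
    water = volumetric_flow_m3_s(rack_w, WATER_CP, WATER_DENSITY, dt)
    air = volumetric_flow_m3_s(rack_w, AIR_CP, AIR_DENSITY, dt)
    print(f"water: {water * 60_000:.0f} L/min")   # about 173 L/min
    print(f"air:   {air:.2f} m^3/s")              # about 9.95 m^3/s
    print(f"air needs ~{air / water:,.0f}x the volume flow of water")
```

Under these assumptions, water needs roughly 173 L/min, while air needs nearly 10 m³ of airflow every second. That is a ratio of several thousand to one, which is why ultra-dense racks depend on liquid as the primary thermal path.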

3. Flexible Floor Planning and Structural Readiness

AI hardware is heavier and more compact. When building a data center, floor loading capacity, rack placement flexibility, and cable routing pathways must accommodate future density increases.

Future-ready designs include:

- Higher floor load ratings

- Overhead cable management for airflow optimization

- Modular data halls that allow reconfiguration

- Clear zoning between high-density and standard-density racks

Flexibility ensures the facility remains relevant as hardware generations evolve.

Sustainability in a High-Density World

AI workloads increase energy consumption, but sustainability cannot be compromised.

A future-ready data center build integrates:

- Renewable power sourcing

- On-site solar integration, where it is feasible

- Battery Energy Storage Systems (BESS)

- High-efficiency cooling systems with reduced water usage

- Waste heat recovery strategies

Designing for sustainability from the outset reduces operational expenditure while aligning with ESG commitments.

The goal is not simply to power AI; it is to power it responsibly.

The Role of Modular and Prefabricated Design

Speed-to-market is a critical competitive advantage. Modular and prefabricated solutions are becoming increasingly central to building a data center.

Benefits include:

- Reduced on-site construction timelines

- Higher quality control through factory-built systems

- Lower risk of delays

- Faster commissioning

A modular approach allows scalable growth, enabling organizations to deploy capacity in phases aligned with demand.

ExpoTech AI data centers feature electrical systems akin to those of industrial power plants, not just office server rooms. They utilize high-capacity busbars, high-voltage distribution, and selective UPS deployments to handle racks drawing tens of kilowatts continuously. Designing for these loads means embracing HPC principles (minimal oversubscription, robust power quality measures) rather than traditional enterprise assumptions. The result is that new data centers by cloud giants and colocation providers are being purpose-built for high density.

The evolution from traditional to AI data centers is characterized by surging power densities and a complete rethink of cooling and power delivery. Where a classic data center focused on reliability for moderate loads, an AI center focuses on performance and throughput for massive loads, which necessitates HPC-grade solutions. Several elements now work in concert to enable the next generation of AI computing. These include higher-density racks (10× the power), advanced liquid cooling (water on chips, or even fully submerged servers), and creative power strategies (selective UPS, high-voltage distribution). Purely air-cooled, low-density facilities are clearly becoming obsolete because they cannot economically support modern AI clusters. In their place, a new breed of high-density, liquid-cooled data centers is rising. Industry leaders such as NVIDIA are pioneering this shift, with reference designs that point toward 1 MW per rack in the future.

Tomorrow’s data centers, including ExpoTech’s, will look more like supercomputers under the hood. Those that adapt will efficiently power the AI revolution. Those that don’t will be left with empty racks and underutilized space, a testament to how quickly technology outgrows the status quo. The shift to AI-centric design is not a niche trend but a fundamental change in data center architecture. It is happening now on a global scale, wherever AI workloads demand top performance.

Only a few years ago, rack power densities were modest and predictable. Most enterprise environments operated comfortably below 5 kW per rack, and even large-scale deployments were designed around conservative thermal assumptions. Airflow was abundant, margins were wide, and cooling strategies evolved slowly alongside incremental improvements in compute performance.

That equilibrium no longer exists. Average rack densities have climbed into the low to mid-teens, and forward-looking deployments are accelerating well beyond that baseline. AI and HPC clusters are now driving rack power past 30 kW, with many environments planning for 50 kW and higher as GPU density continues to increase. In purpose-built AI facilities, densities approaching or exceeding 100 kW per rack are no longer theoretical. They are actively shaping design decisions today.

This shift is not the result of a single trend. It reflects the convergence of GPU-intensive AI training and inference, high-density HPC architectures, and the return of critical workloads from public cloud platforms into enterprise and colocation facilities. Together, these forces have created a clear divergence in thermal reality. While many traditional data centers still operate near 12 to 15 kW per rack, hyperscale and AI-focused environments are already running at more than double that level.

Air was once the quiet constant of data center design. Today, it is being pushed to the limits of its physical capability. The way servers draw in, move, and reject air has fundamentally changed. As a result, airflow is no longer a background assumption. It has become a primary design constraint that will determine how successfully data centers scale to support AI-driven workloads.

The difference between legacy servers and modern AI systems is structural, not incremental. AI platforms introduce a new thermal and electrical reality driven by several factors:

- Thermal density: GPUs generate significantly higher heat flux per unit area than traditional CPUs.

- Power concentration: AI systems draw power at the rack and chassis level rather than distributing load across the room.

- Environmental sensitivity: Modern accelerators are more sensitive to airflow stability, pressure balance, and contamination.

Real-world deployments now reflect this shift. Operators are already running AI racks in the 70 to 75 kW range using rear-door heat exchangers and liquid-assisted cooling architectures. These are not pilot experiments. They are production environments supporting revenue-generating workloads.

In the AI Blueprint reference design, cooling and power are planned at the pod level, with each pod supporting over 2.2 MW of IT load across tightly integrated power and cooling systems.

This approach highlights a broader industry shift away from monolithic halls toward modular, high-density building blocks that can scale rapidly without destabilizing the facility. The Blueprint reinforces this trend by treating liquid cooling as the primary thermal pathway, not an enhancement. In the reference architecture:

- Each AI rack receives dedicated liquid cooling

- Modular coolant distribution units (CDUs) deliver over 6 MW of liquid IT heat removal per pod

- Air handles only the remaining residual load, typically 20 to 25 kW per rack, under tightly managed conditions
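The pod-level numbers above can be sketched as a simple heat budget in which liquid removes everything except the residual load left to air. The 2.2 MW pod IT load and the 20 to 25 kW air residual come from the reference design described above. The 130 kW rack size is hypothetical, and the model does not attempt to reproduce the CDU capacity figure.

```python
POD_IT_KW = 2200.0   # "over 2.2 MW of IT load" per pod, from the reference design


def cooling_split(rack_kw, air_residual_kw):
    """Split one rack's heat between the liquid loop and room air (kW)."""
    liquid_kw = max(rack_kw - air_residual_kw, 0.0)
    return liquid_kw, rack_kw - liquid_kw


def racks_per_pod(pod_it_kw, rack_kw):
    """Whole racks that fit within the pod's IT power budget."""
    return int(pod_it_kw // rack_kw)


if __name__ == "__main__":
    rack_kw = 130.0   # hypothetical AI rack
    air_kw = 25.0     # upper end of the 20 to 25 kW residual air load
    liquid, air = cooling_split(rack_kw, air_kw)
    racks = racks_per_pod(POD_IT_KW, rack_kw)
    print(f"per rack: {liquid:.0f} kW liquid ({liquid / rack_kw:.0%}), {air:.0f} kW air")
    print(f"pod: {racks} racks, {racks * liquid:,.0f} kW liquid, {racks * air:,.0f} kW air")
```

Under these assumptions a pod holds 16 racks. About 81% of each rack's heat, or 1,680 kW per pod, goes to liquid, and 400 kW goes to air.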

What’s important to note is that airflow and air management aren’t going away. The shift we’re seeing does not eliminate air; it redefines its role. Air becomes a precision-managed system that supports stability, cleanliness, and resilience rather than carrying the full thermal burden.

AI-ready facilities must be designed for:

- Rapid increases in density that outpace traditional retrofit cycles

- Modular architectures that isolate risk and enable fast deployment

- Hybrid cooling systems that intentionally balance air and liquid

- Operational models that treat airflow quality as a reliability variable

As generative AI workloads scale, so do the physical and thermal requirements of GPU infrastructure:

- NVIDIA’s Blackwell GB300 racks will hit 163 kW per rack in 2025

- Vera Rubin NVL144 systems may require 300+ kW per rack in 2026

- Rubin Ultra NVL576 racks are projected to exceed 600 kW per rack by 2027

- Google’s Project Deschutes has already unveiled a 1 MW rack design

These advances are only possible with direct-to-chip liquid cooling. DLC systems remove heat directly from the silicon die, enabling dense GPU configurations and allowing data centers to scale performance without thermal throttling.

While a standard enterprise data center may operate at 10–50 MW, a single AI training cluster can require 30 times that, making AI-focused power planning essential.
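The scale of these numbers is easier to grasp against ExpoTech's own sites. The sketch below applies the 30× multiplier to the 10–50 MW enterprise range. It then estimates how many racks a 1,050 MW site such as Ghatail could power at the per-rack figures cited above. It assumes, hypothetically, that the full MW (AC) figure is available to the facility and that the facility runs at a PUE of 1.3. It also ignores redundancy, so real rack counts would be lower.

```python
import math


def cluster_power_range_mw(low_mw, high_mw, multiplier):
    """Scale a facility power range by a multiplier (30x in the text above)."""
    return low_mw * multiplier, high_mw * multiplier


def racks_supported(site_mw, rack_kw, pue):
    """Racks a site could power if site_mw is the total facility budget.

    PUE (power usage effectiveness) = total facility power / IT power,
    so IT power = site power / PUE.
    """
    it_kw = site_mw * 1000 / pue
    return math.floor(it_kw / rack_kw)


if __name__ == "__main__":
    print(cluster_power_range_mw(10, 50, 30))    # (300, 1500) MW
    for rack_kw in (163, 300, 600):              # per-rack figures cited above
        print(rack_kw, "kW racks:", racks_supported(1050, rack_kw, pue=1.3))
```

Scaling the 10–50 MW enterprise range by 30 gives 300–1,500 MW per AI training cluster. Under the stated assumptions, 1,050 MW supports roughly 4,955 racks at 163 kW but only about 1,346 racks at 600 kW.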

Recent analyses from McKinsey, NVIDIA, and the International Energy Agency point to AI consuming more than 3.5% of global electricity by 2030, with terawatt-scale demand becoming a serious planning scenario.

This kind of demand necessitates long-lead utility coordination, grid reinforcement, and next-gen electrical design. Hyperscalers aren’t simply looking for AI data center infrastructure; they’re looking for energy partners who can plan, permit, and deliver power years ahead of schedule.

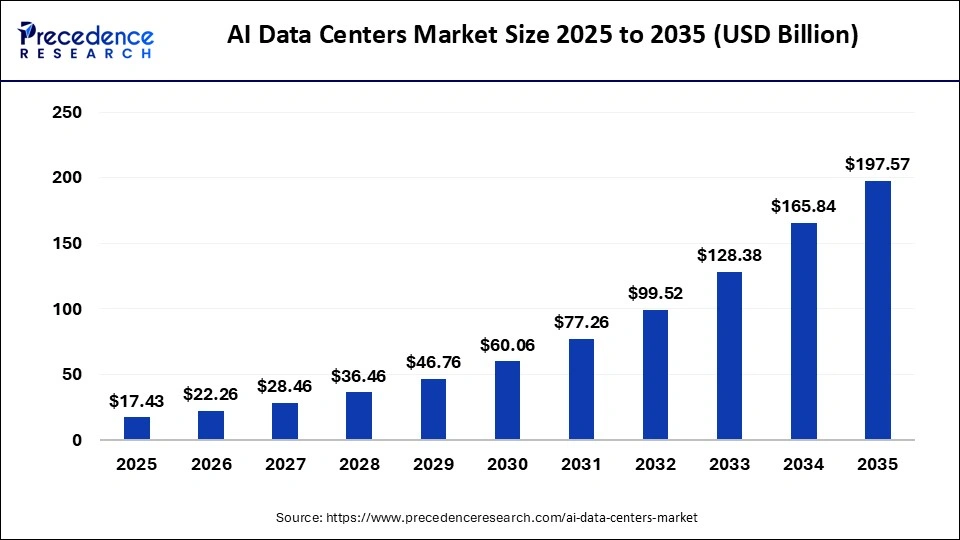

The economic impact of AI data centers

The unprecedented demand for data processing capabilities has made specialized data centers a strategic asset for companies seeking innovation and competitiveness. However, this growth also brings significant economic challenges, including high upfront investments, substantial operational costs, and the need for long-term sustainable solutions. For instance, Microsoft announced plans to invest $80 billion in 2025 to build new data centers dedicated to training AI models and deploying AI- and cloud-based applications.

Cost savings and ROI from AI-driven optimizations

Implementing AI in data centers offers numerous opportunities to reduce operational costs. The key factors contributing to cost reduction and improved return on investment include:

- Energy efficiency: AI enables real-time adjustments to energy consumption and optimizes cooling systems, leading to lower electricity costs.

- Automation of operational processes: AI-driven automation eliminates repetitive manual tasks and effectively manages workload distribution, preventing resource underutilization and maximizing infrastructure performance.

- Reduced maintenance costs: Predictive maintenance allows data centers to anticipate failures and repair needs, avoiding unexpected or unnecessary stoppages and extending equipment lifespan.

- Intelligent scalability: AI enables data centers to scale dynamically, automatically adjusting capacity based on demand. It also helps predict demand surges, ensuring proper resource allocation and reducing costs associated with underutilization or infrastructure overload.
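Predicting demand surges and scaling ahead of them can be sketched with simple exponential smoothing plus a headroom margin. This is an illustrative baseline, not how any particular AI operations platform works. The smoothing factor, the 20% headroom, the 50-unit capacity block, and the demand history are all hypothetical.

```python
import math


def exp_smooth_forecast(history, alpha=0.5):
    """Simple exponential smoothing: returns the one-step-ahead demand forecast.

    Each new observation pulls the smoothed level toward it by a factor alpha.
    """
    level = history[0]
    for observed in history[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level


def units_to_provision(forecast, headroom, unit_capacity):
    """Capacity blocks needed to cover the forecast plus a safety margin."""
    return math.ceil(forecast * (1 + headroom) / unit_capacity)


if __name__ == "__main__":
    demand = [100, 120, 140, 160]                 # hypothetical demand samples
    forecast = exp_smooth_forecast(demand, alpha=0.5)
    print(forecast)                               # 142.5
    print(units_to_provision(forecast, headroom=0.2, unit_capacity=50))  # 4
```

For the history 100, 120, 140, 160, the forecast is 142.5. With 20% headroom, the sketch provisions four 50-unit blocks. Simple exponential smoothing lags a steadily rising series, as it does here, which is one reason for the headroom. Trend-aware methods such as Holt's linear method reduce that lag.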

What’s next for AI and machine learning models in data centers?

The continuous advancements in AI and machine learning models require data centers to evolve to support more demanding, complex, and dynamic workloads. Future trends point to:

- Increased demand for specialized computing power: With the exponential growth of generative AI and deep learning models, there will be a rising need for high-performance processing units such as GPUs, TPUs, and NPUs (Neural Processing Units) capable of handling complex real-time operations.

- Greater focus on energy efficiency: AI models consume significant energy during training and inference phases. As a result, there will be broader adoption of efficiency strategies, including intelligent workload management, advanced cooling systems, and renewable energy sources.

- Evolution of edge computing: To meet real-time processing needs, data centers will increasingly combine centralized and distributed operations, leveraging edge computing solutions to reduce latency and enhance efficiency.

- Adoption of hyperconverged architectures: Integrating storage, computing, and networking into a single infrastructure will enable more efficient resource management, simplifying operations and reducing operational costs.

How AI is transforming data center design and architecture

AI is driving a shift in the design and architecture of data centers on multiple levels:

- Optimization of server layout and distribution: Advanced algorithms analyze usage patterns to suggest more efficient configurations, reducing energy consumption and improving airflow.

- Modular and flexible architectures: Modular designs will enable scalability, allowing for easy expansion as business needs grow without requiring significant structural changes.

- Intelligent cooling: New designs integrate liquid cooling systems and dynamic cooling mechanisms, where AI-powered sensors monitor and adjust airflow to maintain optimal thermal operations, reducing costs and environmental impact.

- Automated, real-time security: Future data centers will leverage AI to detect and respond to cyber threats, ensuring data integrity and compliance with security regulations.

With these transformations, data centers will become increasingly autonomous, resilient, and optimized to support the growth of AI sustainably and efficiently.

How are AI data centers impacting job roles in IT and data management?

AI is transforming the role of IT professionals, automating repetitive tasks and allowing teams to focus on strategic areas. Infrastructure management is becoming more efficient, requiring new data analysis and security skills. Traditional functions are evolving into more specialized roles focused on supervision and process optimization.

How can AI data centers support continuous improvement in operations?

AI data centers can support continuous operations improvement through advanced analytics, predictive maintenance, and automation. AI-driven monitoring systems can analyze large volumes of data in real time to identify inefficiencies, optimize energy consumption, and enhance cooling strategies. Predictive analytics help anticipate hardware failures, reducing downtime and maintenance costs. Additionally, AI can automate routine tasks such as workload balancing and resource allocation, ensuring optimal performance and scalability. These capabilities enable a data-driven approach to continuous improvement, increasing efficiency, lowering operational costs, and enhancing overall reliability. AI and continuous improvement will increasingly reinforce each other, driving innovation and efficiency across various sectors.